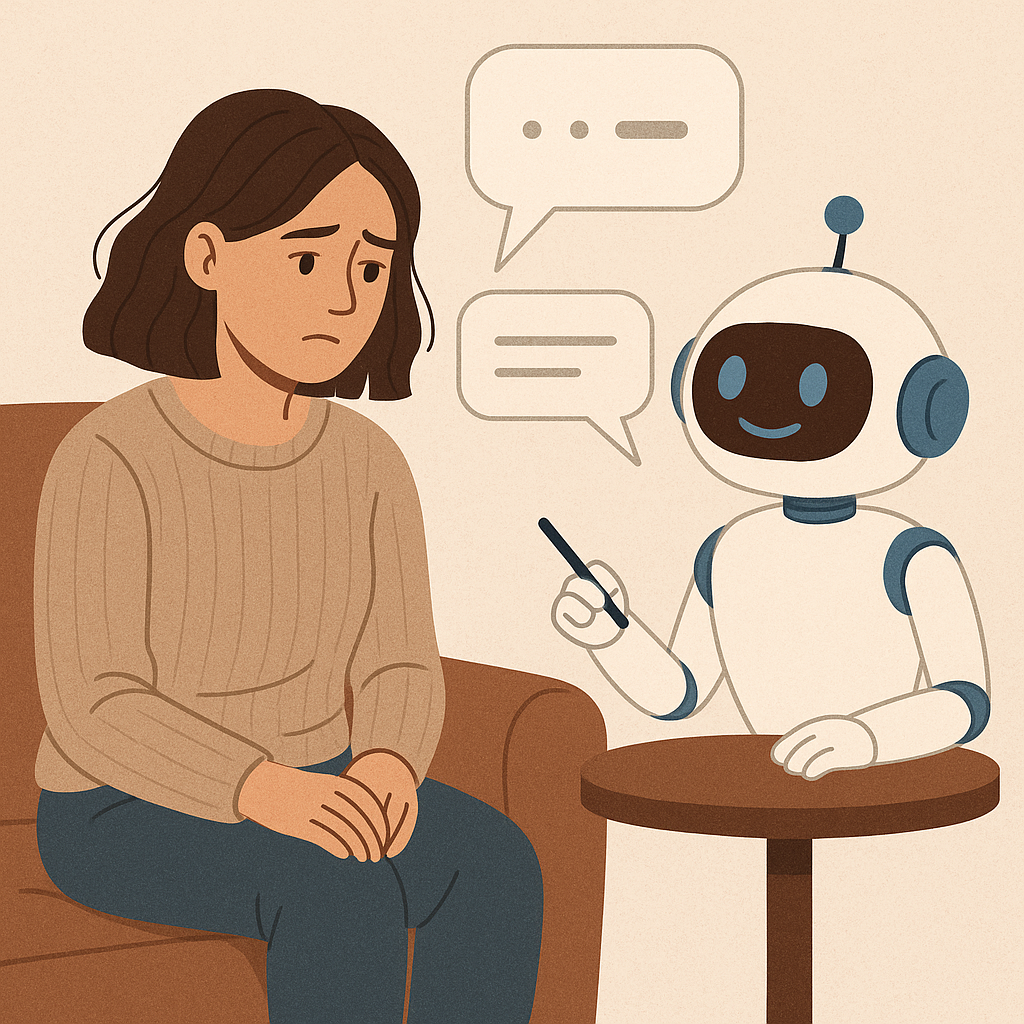

Can Chatbots Provide Real Mental Health Support?

In today’s fast-paced digital world, technology is becoming more deeply intertwined with our daily lives. From scheduling appointments to ordering groceries, we rely on it for convenience and efficiency. But can technology, specifically chatbots, help us navigate something as delicate and complex as mental health?

The short answer is: kind of—but with limits.

Chatbots designed for mental health support are on the rise. These are AI-powered programs that simulate human conversation to help users talk through feelings, track moods, or manage symptoms of anxiety, depression, and stress. They’re available 24/7, they don’t judge, and they offer instant feedback. For someone who’s struggling late at night or feels uncomfortable opening up to another person, that kind of accessibility can be a lifeline.

One of the most popular uses of chatbots in mental health is delivering techniques based on Cognitive Behavioral Therapy (CBT). Some bots prompt users to reframe negative thinking, journal about their emotions, or practice deep breathing. This kind of structured support can help users reflect and even adopt healthier thought patterns over time. For people new to therapy or looking for a little extra support between sessions, chatbots can be a helpful companion.

But while chatbots are useful, they are not a replacement for real, human therapy. That’s where things get tricky.

Mental health is complicated. It’s not just about mood tracking or offering pre-set coping strategies; it’s about building trust, unpacking deep-seated trauma, and understanding the unique, messy context of someone’s life. A chatbot, no matter how advanced, can’t pick up on subtle emotional cues or nonverbal behavior, and it can’t respond compassionately to a crisis the way a trained therapist can.

There’s also the matter of data privacy and trust. When someone shares sensitive mental health details with a chatbot, where does that information go? Is it secure? Could it be used for advertising or, worse, shared without consent? These concerns are valid, and they highlight the importance of transparency and ethical design in mental health technology.

That said, chatbots do have an important role to play. In places where mental health professionals are scarce or where therapy is stigmatized, chatbots can be a starting point. They can encourage people to seek help, educate them about mental health conditions, and provide emotional support in moments of need. For young people especially—who are already accustomed to texting and digital interactions—chatbots can be a less intimidating first step toward self-care.

Another strength of chatbots is constant availability. Unlike therapists with limited hours or long waitlists, chatbots are always available. You don’t need an appointment. You don’t have to wait. If you’re having a panic attack at 2 a.m. or you just need to talk, they’re there. Sometimes, that alone can make a big difference.

The future of mental health support likely involves a blend of technology and human care. Chatbots can handle light emotional check-ins, help people track their moods, and remind them to practice self-care techniques. Meanwhile, therapists can focus on the more nuanced, in-depth conversations that require empathy, intuition, and human experience.

In the end, mental health chatbots aren’t a magical fix—but they are a step forward. They open the door to greater accessibility, reduce stigma, and give people tools to manage their well-being. And while they may never replace human connection, they just might be the bridge that helps someone get there.

So yes, chatbots can provide real mental health support. Just not all of it. And that’s okay. Because sometimes, a small step is all it takes to start walking toward healing.

If you or a loved one is struggling with addiction or mental health issues, please give us a call today at 855-952-3546.